Building a Smarter Community Search: A Guide to Hybrid Retrieval and Model-Based Evaluation

Overview

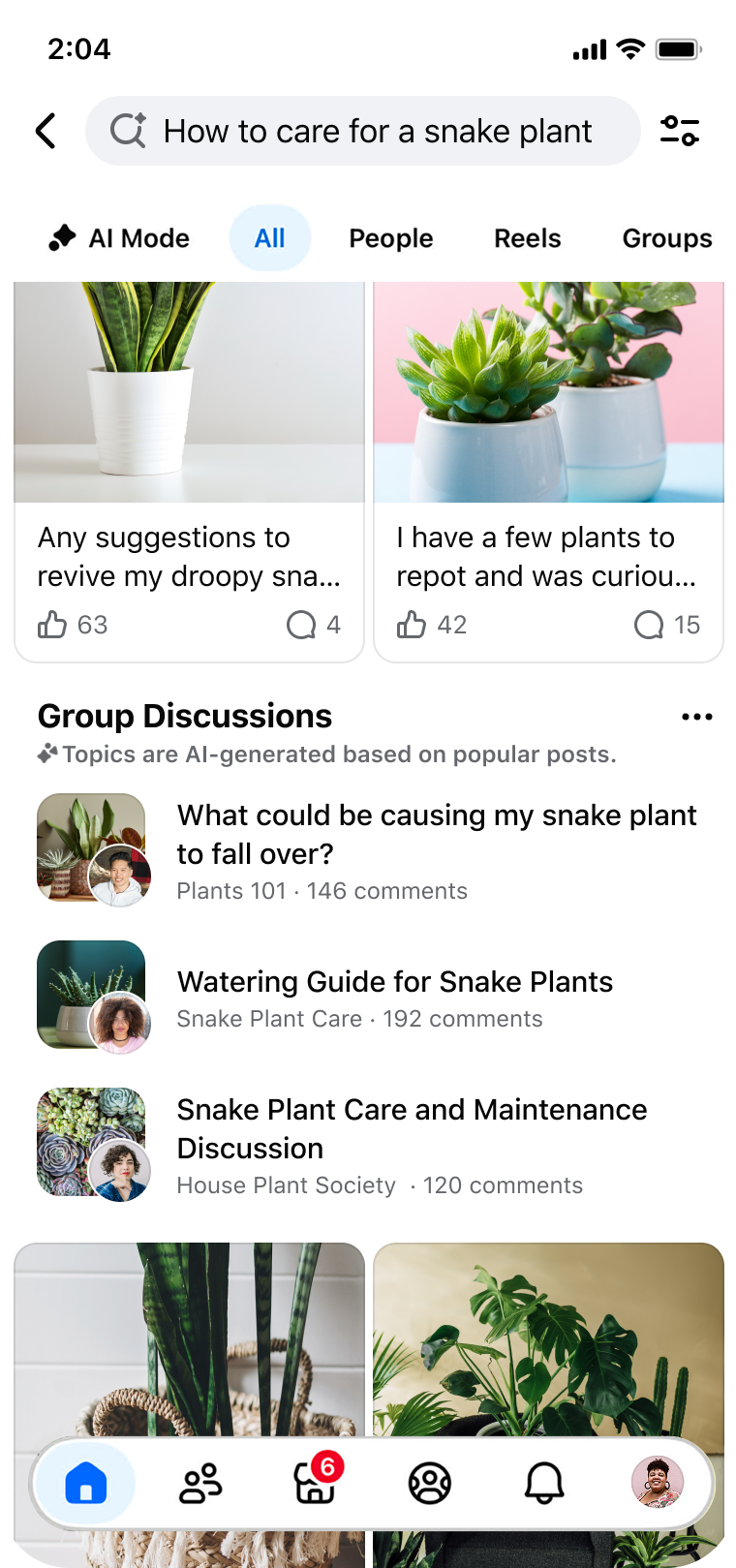

Searching within online communities often feels like sifting through a haystack of conversations to find a single needle of relevant advice. Facebook Groups, with billions of users, face three core friction points: discovery (finding content when your words don't match the community's language), consumption (the effort needed to piece together answers from long threads), and validation (confirming decisions using scattered expert opinions). This guide walks you through the architectural transformation that addressed these challenges—moving from basic keyword matching to a hybrid retrieval architecture combined with automated model-based evaluation. You'll learn how to design, implement, and evaluate a search system that understands user intent, reduces effort, and unlocks the collective wisdom of groups.

Prerequisites

Before diving into the technical details, ensure you have a foundational understanding of:

- Information retrieval basics (e.g., TF-IDF, BM25, vector space models)

- Natural language processing (NLP) concepts like word embeddings and transformer models

- Basic machine learning evaluation (precision, recall, NDCG)

- Programming experience with Python (pseudo-code examples are provided)

- Familiarity with search system metrics (e.g., click-through rate, user satisfaction surveys)

Step-by-Step Implementation

Step 1: Identify Your Friction Points

Every community search system has unique pain points. In Facebook's case, the three major issues were discovery, consumption, and validation. To start, map out similar friction points in your own context. For example, survey users or analyze search logs for queries that return zero results despite relevant content existing.
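As one way to start on the log analysis, the sketch below counts the queries that returned no results. The log schema (dicts with `query` and `num_results` keys), the function name `zero_result_queries`, and the sample entries are assumptions made for illustration; adapt them to whatever your logging pipeline records.

```python
from collections import Counter


def zero_result_queries(log_entries, top_n=10):
    """Return the most frequent queries that produced no results.

    log_entries: iterable of dicts with the hypothetical keys
    'query' (str) and 'num_results' (int).
    """
    counts = Counter(
        entry["query"].strip().lower()  # fold case and whitespace variants together
        for entry in log_entries
        if entry["num_results"] == 0
    )
    return counts.most_common(top_n)


# Hypothetical log sample
sample_log = [
    {"query": "Best stroller for twins", "num_results": 0},
    {"query": "best stroller for twins ", "num_results": 0},
    {"query": "sourdough starter", "num_results": 12},
    {"query": "fix leaky faucet", "num_results": 0},
]
print(zero_result_queries(sample_log))
```

A zero-result query only signals a discovery problem if relevant content actually exists, so the top entries from a report like this still need a manual spot check.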

Step 2: Move from Keyword Matching to Hybrid Retrieval

Traditional lexical search (exact word matching) fails when intent and phrasing differ. The solution is a hybrid approach that combines:

- Lexical retrieval (e.g., BM25) for exact terms and high-precision matches.

- Semantic retrieval using dense embeddings (e.g., from a BERT-like model) to capture meaning and synonyms.

Here's a pseudo-code example of hybrid scoring:

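    def hybrid_score(query, document, lexical_range, embedding_range, lexical_weight=0.5):
        # compute_bm25, embed, cosine_similarity, and normalize are placeholder helpers.
        bm25_score = compute_bm25(query, document)
        emb_score = cosine_similarity(embed(query), embed(document))
        # Normalize both scores to the same range (e.g., 0-1), using the
        # observed range of each component (e.g., a (min, max) pair).
        normalized_bm25 = normalize(bm25_score, lexical_range)
        normalized_emb = normalize(emb_score, embedding_range)
        return lexical_weight * normalized_bm25 + (1 - lexical_weight) * normalized_emb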

This simple linear combination can be tuned per use case. Deploy this as a retrieval layer that fetches candidates from both indexes, then ranks them using the hybrid score.
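To make the retrieval layer more concrete, here is a minimal sketch of merging candidates from the two indexes and ranking them by the weighted sum. It assumes each index returns a dictionary of raw scores keyed by document ID; `min_max`, `hybrid_rank`, and the sample scores are hypothetical stand-ins, not the production design.

```python
def min_max(scores):
    """Rescale a dict of raw scores to [0, 1]; all-equal scores map to 0.0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 0.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_rank(lexical_scores, semantic_scores, lexical_weight=0.5, top_k=10):
    """Merge candidates from both indexes and rank them by the weighted sum.

    A document missing from one index contributes 0.0 for that component.
    """
    lex = min_max(lexical_scores)
    sem = min_max(semantic_scores)
    combined = {
        doc_id: lexical_weight * lex.get(doc_id, 0.0)
        + (1 - lexical_weight) * sem.get(doc_id, 0.0)
        for doc_id in set(lex) | set(sem)
    }
    # Sort by score descending, then by ID so that ties are deterministic
    ranked = sorted(combined.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:top_k]


# Hypothetical raw scores: BM25 is unbounded, cosine similarity lies in [-1, 1]
bm25 = {"post_a": 12.0, "post_b": 4.0, "post_c": 8.0}
cosine = {"post_b": 0.9, "post_c": 0.5, "post_d": 0.6}
for doc_id, score in hybrid_rank(bm25, cosine):
    print(doc_id, round(score, 3))
```

Treating a missing score as 0.0 is a simplifying choice: it penalizes documents that only one index retrieved. Normalizing per query, as done here, also means the lowest-scoring candidate in each list always maps to 0.0, which may or may not suit your data.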

Step 3: Implement Automated Model-Based Evaluation

Manual evaluation of search results doesn't scale. Instead, use a separate model to judge relevance automatically. For instance, you can fine-tune a BERT-based classifier on labeled query-document pairs (e.g., from human raters). Then, during development, you run the model on held-out test queries to compute metrics like Normalized Discounted Cumulative Gain (NDCG) or Mean Reciprocal Rank (MRR).

Example evaluation pipeline:

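    import numpy as np

    def evaluate(relevance_model, test_queries, retrieval_system, k=10):
        # get_ground_truth and ndcg_at_k are placeholder helpers you must supply.
        ndcg_scores = []
        for query in test_queries:
            results = retrieval_system.search(query, top_k=k)
            # Model-judged relevance of each returned document, in ranked order
            predicted_relevances = [relevance_model.predict(query, doc) for doc in results]
            # Reference labels for this query (assumed aligned with `results`)
            true_relevances = get_ground_truth(query)
            ndcg_scores.append(ndcg_at_k(true_relevances, predicted_relevances, k=k))
        # Avoid averaging an empty list when no test queries are given
        return float(np.mean(ndcg_scores)) if ndcg_scores else 0.0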

Automating evaluation lets you iterate quickly and catch regressions before deployment.
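For reference, the two metrics named in this step can be computed as follows. This is a minimal sketch using the linear-gain form of DCG; `dcg_at_k`, `ndcg_at_k`, and `reciprocal_rank` are illustrative names. Note that this `ndcg_at_k` takes a single list of graded relevances in ranked order, so its signature differs from the placeholder helper in the pipeline above.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the first k graded relevances."""
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(relevances, k):
    """DCG divided by the DCG of the ideal (descending) ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def reciprocal_rank(relevances):
    """1 / rank of the first relevant result, or 0.0 if none is relevant."""
    for rank, rel in enumerate(relevances, start=1):
        if rel > 0:
            return 1.0 / rank
    return 0.0


# Graded relevance of each returned result, in ranked order (hypothetical)
ranked_relevances = [0, 2, 1]
print(round(ndcg_at_k(ranked_relevances, k=3), 3))
print(reciprocal_rank(ranked_relevances))  # first relevant hit is at rank 2
```

MRR is the mean of `reciprocal_rank` over all test queries. The ideal ordering here is built only from the returned results, so relevant documents that the system never retrieved do not lower the score; if you have full judgments per query, build the ideal list from those instead.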

Step 4: Deploy and Monitor

Roll out the new search in phases. Use A/B testing to compare hybrid retrieval against the old lexical system. Key metrics to track include:

- Search engagement (e.g., click-through rate, time spent on results)

- Relevance metrics (automated NDCG on live queries)

- Error rates (e.g., crashes, timeouts)

- User feedback (thumbs up/down, survey responses)

In Facebook's deployment, improvements in engagement and relevance were observed with no increase in error rates—highlighting the robustness of the hybrid architecture.
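When comparing the two arms of an A/B test, it helps to check whether a difference in click-through rate is larger than chance would explain. The sketch below applies a standard two-proportion z-test; the source does not say which statistical test was used, and the function name and counts are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Return (z, two-sided p-value) for the difference in click-through rate."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no detectable difference
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: arm A = lexical search, arm B = hybrid retrieval
z, p = two_proportion_z_test(clicks_a=1000, n_a=10000, clicks_b=1100, n_b=10000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A positive `z` means arm B had the higher click-through rate. This test assumes independent searches and reasonably large samples; sessions from the same user are correlated, so a per-user aggregation is often a safer unit of analysis.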

Common Mistakes

Over-reliance on Lexical Matching

Sticking with pure keyword search ignores semantic gaps. Even with a well-maintained synonym list, you'll still miss paraphrases and related concepts. Always incorporate some semantic signal.

Ignoring the Effort Tax

Even if your system retrieves the right content, users may still need to read many comments. Consider summarization or highlight extraction as a next step.

Poor Normalization of Scores

When combining lexical and semantic scores, ensure they are on comparable scales. Otherwise, one component may dominate. Use min-max normalization or learn weights via cross-validation.
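One simple way to choose the weight is a grid search against labeled validation queries. The sketch below is a single hold-out search rather than full cross-validation, and it assumes the component scores are already normalized to [0, 1]; the function names and the validation data are hypothetical.

```python
def mean_reciprocal_rank(weight, validation_set):
    """MRR of the weighted ranking; scores are assumed already in [0, 1]."""
    total = 0.0
    for candidates, relevant_id in validation_set:
        ranked = sorted(
            candidates,
            key=lambda doc_id: -(weight * candidates[doc_id][0]
                                 + (1 - weight) * candidates[doc_id][1]),
        )
        total += 1.0 / (ranked.index(relevant_id) + 1)
    return total / len(validation_set)


def tune_lexical_weight(validation_set, grid=(0.0, 0.25, 0.5, 0.75, 1.0)):
    """Pick the grid weight with the highest MRR (the first one wins ties)."""
    return max(grid, key=lambda w: mean_reciprocal_rank(w, validation_set))


# Hypothetical validation data:
# ({doc_id: (normalized_bm25, normalized_emb)}, ID of the relevant document)
validation_set = [
    ({"a": (1.0, 0.0), "b": (0.2, 1.0)}, "b"),
    ({"c": (0.9, 0.1), "d": (0.0, 0.3)}, "c"),
]
best = tune_lexical_weight(validation_set)
print(best, mean_reciprocal_rank(best, validation_set))
```

In this toy data the first query needs the semantic signal and the second needs the lexical one, so both extremes of the grid lose to a mixed weight. To turn this into cross-validation, repeat the search on several train/validation splits and check that the chosen weight is stable.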

Lack of Automated Evaluation

Manual evaluation is slow, expensive, and inconsistent. Without automated model-based evaluation, you risk deploying a system that performs worse on unseen queries. Invest in a robust evaluation model.

Not Validating with Real-World Queries

Synthetic test sets may not reflect actual user intent. Analyze search logs to extract common queries and use them for evaluation. Otherwise, you may optimize for the wrong objective.
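A minimal sketch of drawing an evaluation set from logged queries follows. It combines the most frequent ("head") queries with a random sample of the remaining ("tail") ones so that rare phrasings are represented too. The function name, parameters, and sample queries are assumptions for illustration.

```python
import random
from collections import Counter


def build_eval_queries(logged_queries, n_head=2, n_tail=2, seed=0):
    """Combine the most frequent queries with a random sample of the rest."""
    counts = Counter(q.strip().lower() for q in logged_queries if q.strip())
    head = [q for q, _ in counts.most_common(n_head)]
    tail_pool = sorted(q for q in counts if q not in head)
    rng = random.Random(seed)  # fixed seed keeps the eval set reproducible
    tail = rng.sample(tail_pool, min(n_tail, len(tail_pool)))
    return head + tail


# Hypothetical logged queries
logged = [
    "puppy training", "Puppy training", "puppy training",
    "crate size", "crate size",
    "vet near me", "raw diet", "  ",
]
print(build_eval_queries(logged))
```

Real logs can contain personal information, so scrub or aggregate queries before they enter a shared evaluation set.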

Summary

Transforming community search from basic keyword matching to a hybrid retrieval system with automated evaluation solves the core friction points of discovery, consumption, and validation. By combining lexical and semantic signals, you capture both exact terms and user intent. Automated model-based evaluation enables fast, reliable iteration. The steps outlined—identifying friction, implementing hybrid retrieval, building an evaluation model, and careful deployment—provide a repeatable path to unlock the power of community knowledge. The result is a search experience that feels intuitive, reduces user effort, and surfaces the collective wisdom hidden in group conversations.